Research Project

3D Brain Tumor Segmentation

A hybrid quantum-classical self-supervised learning architecture for volumetric medical image analysis, combining contrastive learning with variational quantum circuits — presented at HIMSS 2022.

Overview

Project Summary

This project explored whether variational quantum circuits (VQCs) could meaningfully augment a contrastive self-supervised learning pipeline for 3D brain tumor segmentation on MRI volumes. The architecture was modeled on SimCLR (A Simple Framework for Contrastive Learning of Visual Representations) and extended with a quantum ansatz placed after the CNN encoder in each arm, turning the projector head into a hybrid quantum-classical module.

The work was motivated by the hypothesis that quantum entanglement and superposition might capture richer feature correlations in the projector head's high-dimensional embedding space, potentially improving downstream segmentation performance when fine-tuned with limited labeled data. The proof-of-concept was presented at the HIMSS international health conference, demonstrating the feasibility of quantum-enhanced medical AI pipelines as a research direction.

Architecture

SimCLR + Quantum Ansatz

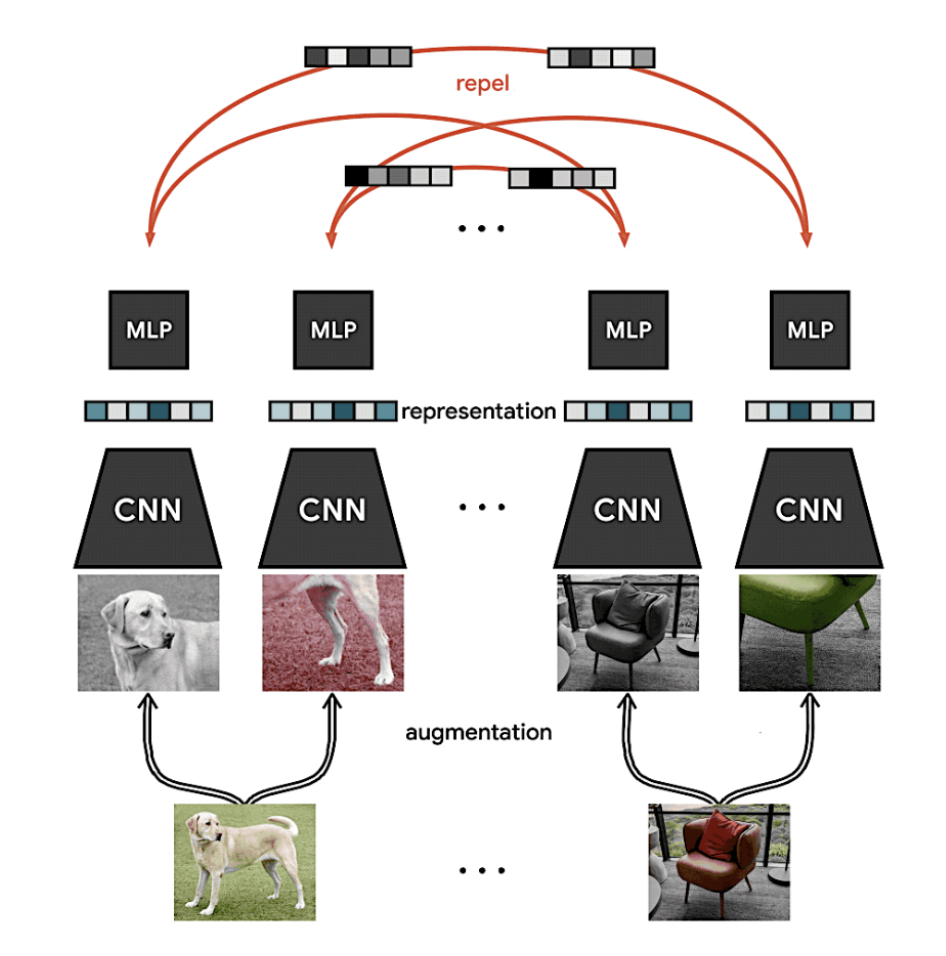

The base framework follows the SimCLR contrastive learning paradigm: two augmented views of the same 3D MRI volume are passed through two encoder-projector arms with shared weights. The resulting representations are trained to be similar for views of the same volume (positive pair) and pushed apart from views of other volumes in the batch (negative pairs) via the normalized temperature-scaled cross-entropy (NT-Xent) loss.
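As a rough illustration of the NT-Xent objective described above, here is a minimal NumPy sketch. It is not the project's training code: the function name `nt_xent_loss`, the default temperature of 0.5, and the batch layout (row i of each view forms a positive pair) are assumptions made for the example.

```python
import numpy as np

def nt_xent_loss(z_a, z_b, temperature=0.5):
    """NT-Xent loss for two batches of embeddings, each of shape (N, D).

    Row i of z_a and row i of z_b are assumed to be two views of the
    same volume (the positive pair); all other rows act as negatives.
    """
    n = z_a.shape[0]
    z = np.concatenate([z_a, z_b], axis=0)             # (2N, D)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)   # unit norm, so dot product = cosine similarity
    sim = (z @ z.T) / temperature                      # (2N, 2N) scaled similarities
    np.fill_diagonal(sim, -np.inf)                     # a sample is never its own negative

    # The positive partner of row i is row i + N (and vice versa).
    pos_idx = (np.arange(2 * n) + n) % (2 * n)

    # Numerically stable log-sum-exp over each row (the softmax denominator).
    row_max = sim.max(axis=1, keepdims=True)
    log_denom = row_max[:, 0] + np.log(np.exp(sim - row_max).sum(axis=1))

    log_prob_pos = sim[np.arange(2 * n), pos_idx] - log_denom
    return float(-log_prob_pos.mean())
```

Embeddings whose two views nearly coincide should yield a lower loss than embeddings paired at random, which is the behavior the contrastive pre-training stage optimizes for.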

The key architectural innovation was the insertion of a variational quantum circuit (VQC) into the projector head of each arm. Rather than a purely classical MLP projector, each arm used an MLP that fed into a parameterized quantum circuit — the quantum ansatz — before producing the final embedding. This hybrid design kept the heavy lifting (3D feature extraction) in the classical CNN encoder while delegating the final representational mapping to a quantum layer.

The encoder backbone was a 3D convolutional neural network (3D CNN) designed to process full volumetric MRI inputs. 3D convolutions capture spatial correlations across the depth axis that 2D slice-by-slice approaches miss, making them better suited to tumor boundary detection and volume estimation tasks. Feature maps were globally average-pooled to produce a fixed-length representation vector before being passed to the projector head.
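The pooling step can be sketched in a few lines of NumPy. The channels-first layout `(N, C, D, H, W)` and the function name are assumptions for illustration; the original encoder's layout is not specified here.

```python
import numpy as np

def global_average_pool_3d(feature_maps):
    """Average each channel over depth, height, and width.

    feature_maps: array of shape (N, C, D, H, W) (assumed channels-first).
    Returns an array of shape (N, C), independent of the spatial size.
    """
    return feature_maps.mean(axis=(2, 3, 4))
```

Because the spatial axes are averaged away, volumes of different sizes produce representation vectors of the same length, which is what lets a fixed-width projector head follow the encoder.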

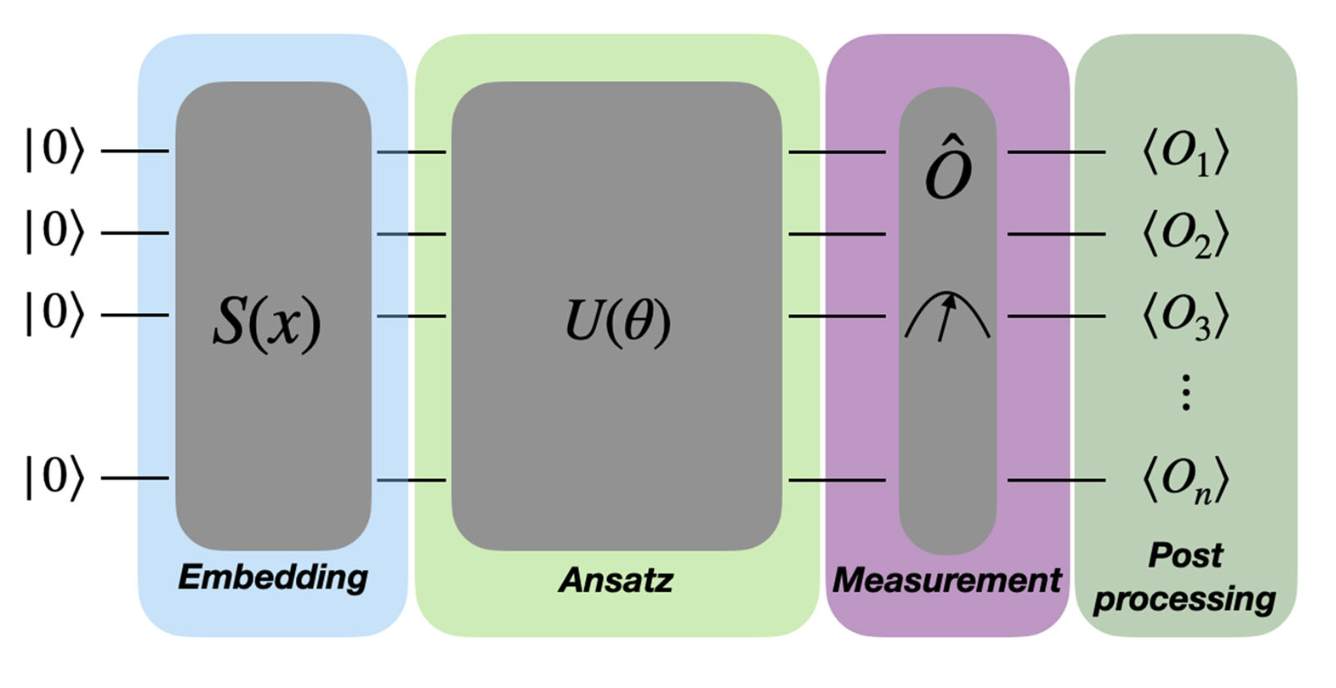

The quantum projector head consisted of a small classical MLP to reduce dimensionality to match the number of available qubits, followed by a variational quantum circuit. The VQC used angle encoding to embed the classical feature vector into qubit rotations, applied layers of parameterized single-qubit rotations and two-qubit entangling gates (the ansatz), and then measured expectation values of Pauli-Z operators to produce the final output embedding. Parameters of the ansatz were trained end-to-end alongside the classical CNN via gradient descent, using the parameter-shift rule to compute quantum gradients.
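The circuit structure described above can be sketched with a small state-vector simulation in plain NumPy. This is an illustrative stand-in, not the project's implementation: the choice of RY rotations for both encoding and ansatz, the ring of CNOT gates, and every function name below are assumptions. The sketch needs at least two qubits.

```python
import numpy as np

def apply_ry(state, angle, qubit, n_qubits):
    """Apply an RY(angle) rotation to one qubit of a real state vector."""
    c, s = np.cos(angle / 2), np.sin(angle / 2)
    psi = np.moveaxis(state.reshape([2] * n_qubits), qubit, 0)
    new = np.stack([c * psi[0] - s * psi[1], s * psi[0] + c * psi[1]])
    return np.moveaxis(new, 0, qubit).reshape(-1)

def apply_cnot(state, control, target, n_qubits):
    """Flip the target qubit on the part of the state where control = 1."""
    psi = state.reshape([2] * n_qubits).copy()
    idx = [slice(None)] * n_qubits
    idx[control] = 1
    sub = psi[tuple(idx)].copy()
    # Indexing out the control axis shifts later axes down by one.
    t_axis = target - 1 if target > control else target
    psi[tuple(idx)] = np.flip(sub, axis=t_axis)
    return psi.reshape(-1)

def expval_z(state, n_qubits):
    """Pauli-Z expectation value for every qubit."""
    probs = (state ** 2).reshape([2] * n_qubits)
    out = []
    for q in range(n_qubits):
        p = np.moveaxis(probs, q, 0)
        out.append(p[0].sum() - p[1].sum())   # P(qubit=0) - P(qubit=1)
    return np.array(out)

def vqc_forward(features, weights):
    """features: (n_qubits,) angles; weights: (n_layers, n_qubits) angles."""
    n = features.shape[0]
    state = np.zeros(2 ** n)
    state[0] = 1.0                                        # start in |0...0>
    for q in range(n):
        state = apply_ry(state, features[q], q, n)        # angle encoding
    for layer in weights:
        for q in range(n):
            state = apply_ry(state, layer[q], q, n)       # trainable rotations
        for q in range(n):
            state = apply_cnot(state, q, (q + 1) % n, n)  # ring of entanglers
    return expval_z(state, n)

def parameter_shift_grad(features, weights, layer, qubit, output):
    """d<Z_output>/d weights[layer, qubit] via the parameter-shift rule."""
    shift = np.zeros_like(weights, dtype=float)
    shift[layer, qubit] = np.pi / 2
    plus = vqc_forward(features, weights + shift)[output]
    minus = vqc_forward(features, weights - shift)[output]
    return 0.5 * (plus - minus)
```

For rotation gates of this form the parameter-shift rule is exact rather than a finite-difference approximation, but it costs two extra circuit evaluations per parameter, which matters for the latency discussion later on.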

Results

Findings

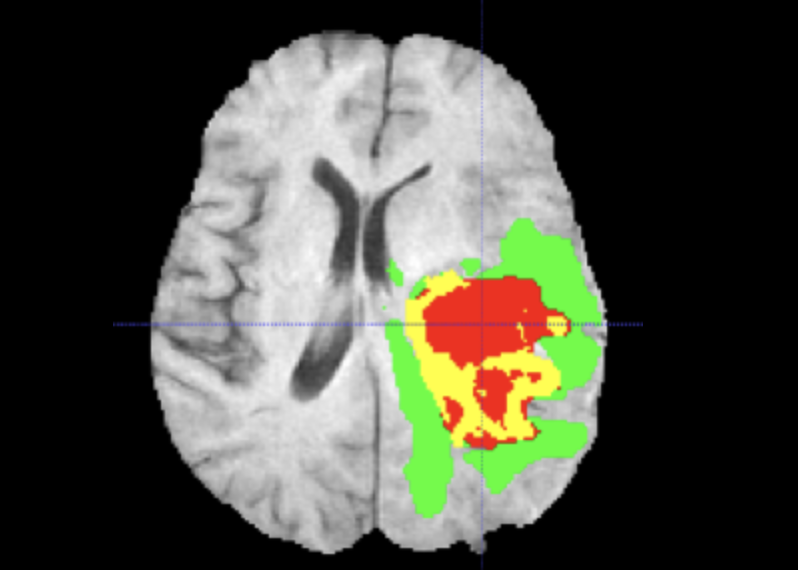

After pre-training with contrastive loss and fine-tuning on a labeled subset of brain tumor MRI volumes, downstream segmentation performance was compared between the classical SimCLR baseline and the quantum-augmented variant. Key metrics included Dice coefficient and Intersection over Union (IoU) on tumor sub-region masks (whole tumor, tumor core, enhancing tumor).
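For reference, both overlap metrics can be computed from a pair of binary masks as in the sketch below. The function name and the smoothing constant `eps` (which makes two empty masks score 1.0) are assumptions for the example; in practice each tumor sub-region would be evaluated as its own binary mask.

```python
import numpy as np

def dice_and_iou(pred, target, eps=1e-7):
    """Dice coefficient and IoU for two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    dice = (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)
    iou = (intersection + eps) / (union + eps)
    return float(dice), float(iou)

# Toy 3D example: two 32-voxel slabs that overlap in 16 voxels.
pred = np.zeros((4, 4, 4), dtype=bool)
target = np.zeros((4, 4, 4), dtype=bool)
pred[:2] = True
target[1:3] = True
dice, iou = dice_and_iou(pred, target)   # dice is about 0.5, iou about 0.333
```

The two metrics are monotonically related (IoU = Dice / (2 - Dice)), so they rank models the same way on a single mask but can differ once averaged across cases.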

Key Finding — Performance

Downstream segmentation performance did not improve when the hybrid MLP-plus-VQC projector head replaced the purely classical MLP projector. Dice scores and IoU metrics were comparable to, or slightly below, the classical baseline across all tumor sub-regions, suggesting the quantum ansatz did not encode more discriminative representations in this setting.

Key Finding — Latency

Circuit simulation introduced significant inference and training latency. Even with a small number of qubits, the overhead of VQC forward passes and gradient estimation via the parameter-shift rule substantially increased wall-clock training time compared to the equivalent classical projector — without a commensurate gain in representation quality.
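A back-of-the-envelope count shows where the overhead comes from. The sketch below assumes one trainable rotation angle per qubit per layer and a pure parameter-shift gradient; the function name, the ansatz shape, and the example sizes are hypothetical, and simulators that differentiate the circuit by other means would have a different cost profile.

```python
def parameter_shift_circuit_evals(n_qubits, n_layers, batch_size,
                                  n_views=2, include_input_grads=True):
    """Circuit evaluations per training step under the parameter-shift rule.

    Assumes one rotation angle per qubit per layer (hypothetical ansatz).
    Each shifted parameter costs two extra evaluations per sample. For
    end-to-end training, the angle-encoded inputs are gate parameters too,
    so their gradients need shifts before backpropagating into the CNN.
    """
    n_params = n_qubits * n_layers
    shifted = n_params + (n_qubits if include_input_grads else 0)
    per_sample = 1 + 2 * shifted              # one forward pass plus two per shift
    return batch_size * n_views * per_sample

# Example: 8 qubits, 3 layers, batch of 32 volumes, two augmented views each.
evals = parameter_shift_circuit_evals(n_qubits=8, n_layers=3, batch_size=32)
# evals == 4160, versus 64 forward passes for a classical projector
```

The count grows linearly with the number of circuit parameters, while each simulated evaluation itself scales with the 2^n state-vector size.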

These results are consistent with the broader consensus in the quantum machine learning community regarding Noisy Intermediate-Scale Quantum (NISQ) devices. NISQ-era hardware is characterized by limited qubit counts, short coherence times, and significant gate error rates. Simulating such circuits classically avoids hardware noise but forfeits any potential quantum speedup, because the cost of state-vector simulation grows exponentially with qubit count. Running on real NISQ hardware would instead introduce noise that further degrades gradient estimates and embedding quality.

Discussion

NISQ Limitations & Outlook

The null result is informative. Contrastive self-supervised learning relies on the projector head producing embeddings in which positive and negative pairs separate cleanly, which requires both expressive capacity and an optimization landscape with usable gradients. NISQ-scale VQCs face a fundamental tension here: shallow circuits may be insufficiently expressive, while deeper circuits run into barren plateaus, where gradient magnitudes shrink exponentially with qubit count and training becomes intractable.

Additionally, the dimensionality bottleneck imposed by qubit count limits (typically 4–12 qubits in simulation for tractable training) means the quantum layer processes a much lower-dimensional signal than a classical MLP projector could accommodate. Future work might explore fault-tolerant quantum hardware with higher qubit counts and lower error rates, or hybrid architectures that use quantum layers at a coarser granularity (e.g., at the classification head rather than the contrastive projector).

The project nonetheless demonstrates the viability of end-to-end hybrid quantum-classical training on a 3D medical imaging task and provides a reproducible baseline for evaluating quantum enhancements to contrastive learning as quantum hardware matures.

Stack